Global AI spending is projected to reach $2.52 trillion by 2026, driven by rapid enterprise adoption and large-scale infrastructure investment (Gartner). Yet most enterprise teams still build AI budgets on guesswork.

Most companies get their first AI cost estimate wrong. Not by 10%. By 3x. AI development cost is not a one-time line item. It is a layered investment: compute, data pipelines, model training, integration, compliance, and ongoing operations. Miss any layer and the budget collapses.

This guide breaks down every cost variable that matters in 2026. Whether you are building an AI chatbot, recommendation engine, or enterprise automation platform, you will find the numbers here.

AI development cost refers to the total investment required to design, build, train, deploy, and maintain an artificial intelligence system. This includes data, compute, engineering labor, infrastructure, compliance, and ongoing operations.

That definition matters because most cost estimates online focus only on the build phase. Real budgets must account for the full lifecycle.

The cost to develop AI is not a single line item. It is a stack of interdependent expenses that compound as your system scales.

A narrow AI model for one task sits at one end of the spectrum. A multi-model enterprise platform with real-time inference and compliance monitoring sits at the other.

In 2026, AI development cost ranges from $15,000 for a basic proof of concept to $600,000 or more for an enterprise AI platform. Most mid-market custom builds land between $80,000 and $250,000.

These ranges depend on four primary variables: model complexity, data requirements, integration depth, and compliance obligations.

AI proof of concept cost: $15,000 – $40,000. Narrow scope, limited data, pre-trained model base.

AI MVP development cost: $40,000 – $100,000. Core functionality, one primary model, basic integrations.

Full custom AI system: $100,000 – $600,000+. Multi-model pipeline, proprietary data, production-grade infrastructure.

Enterprise AI solution cost: $250,000 – $1M+. Compliance, security, multi-tenant architecture, white-labeling.

These figures align with Gartner’s 2025 TCO frameworks, which emphasize evaluating full lifecycle cost rather than upfront development alone.

AI Development Cost Breakdown by Component

| AI Development Component | Estimated Cost Range (USD) | Notes |
| --- | --- | --- |
| Discovery & Requirements Analysis | $5,000 – $20,000 | Scope definition, data audit, feasibility study |
| Data Collection & Preparation | $10,000 – $80,000 | Varies with data volume and quality |
| Model Architecture & Design | $15,000 – $60,000 | Custom vs. pre-trained base model |
| Model Training & Fine-Tuning | $20,000 – $200,000+ | Compute costs scale with dataset size |
| API Development & Integration | $15,000 – $50,000 | Third-party and internal system connections |
| UI/UX & Application Layer | $10,000 – $40,000 | Enterprise-grade interfaces |
| Testing, QA & Security Audit | $10,000 – $35,000 | Red-teaming, compliance checks |
| Deployment & MLOps Infrastructure | $15,000 – $60,000 | Cloud configuration, CI/CD pipelines |
| Post-Launch Maintenance (Annual) | $20,000 – $80,000+ | Model drift, retraining, monitoring |
| TOTAL RANGE | $50,000 – $600,000+ | Varies by complexity and scale |

Most AI cost estimates cover the build. They stop there. The costs that follow deployment are where budgets actually break.

Hidden costs are not edge cases. They are standard operating expenses for any production AI system. They are simply excluded from most vendor quotes because they fall after the contract is signed.

Here is what enterprise buyers consistently underestimate.

Every time your AI model processes a request, it consumes compute. At low volumes, this is negligible. At enterprise scale, it is not.

A customer-facing AI tool handling 500,000 monthly queries can generate $8,000 to $40,000 in monthly inference costs depending on model size and cloud pricing. This number does not appear in most build estimates.
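To make that arithmetic concrete, the monthly bill can be worked back to a cost per query. The sketch below is illustrative only: the function names are hypothetical, and the only inputs taken from this guide are the 500,000 monthly queries and the $8,000 to $40,000 monthly range.

```python
def cost_per_query(monthly_cost_usd: float, monthly_queries: int) -> float:
    """Average inference cost of a single request, in USD."""
    if monthly_queries <= 0:
        raise ValueError("monthly_queries must be positive")
    return monthly_cost_usd / monthly_queries


def projected_monthly_cost(per_query_usd: float, monthly_queries: int) -> float:
    """Monthly inference bill, assuming the per-query cost stays constant."""
    return per_query_usd * monthly_queries


# Figures quoted above: 500,000 queries per month at $8,000 to $40,000 per month.
low = cost_per_query(8_000, 500_000)    # about 1.6 cents per query
high = cost_per_query(40_000, 500_000)  # about 8 cents per query
print(f"Implied cost per query: ${low:.3f} to ${high:.3f}")
```

Feeding the per-query figure into `projected_monthly_cost` with a forecast volume gives a first-pass inference budget. It assumes linear scaling, which real cloud pricing with volume tiers or reserved capacity may not follow.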

AI models degrade over time. User behavior shifts, data distributions change, and model accuracy drops. Regular retraining is not optional.

Retraining cycles for a mid-scale model typically cost $3,000 to $15,000 per run. Monthly retraining on a high-volume system adds $36,000 to $180,000 per year to your operating budget.
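The annual figure is simply the per-run cost multiplied by the retraining cadence, so it is easy to test other schedules. In the sketch below, the quarterly cadence is an assumption added for comparison; only the per-run range and the monthly cadence come from the text.

```python
def annual_retraining_cost(cost_per_run_usd: int, runs_per_year: int = 12) -> int:
    """Yearly retraining spend for a fixed cadence (12 runs per year = monthly)."""
    return cost_per_run_usd * runs_per_year


# Monthly retraining at the per-run range quoted above.
monthly_low = annual_retraining_cost(3_000)
monthly_high = annual_retraining_cost(15_000)

# Hypothetical quarterly cadence, for comparison only.
quarterly_low = annual_retraining_cost(3_000, runs_per_year=4)
quarterly_high = annual_retraining_cost(15_000, runs_per_year=4)

print(f"Monthly:   ${monthly_low:,} to ${monthly_high:,} per year")
print(f"Quarterly: ${quarterly_low:,} to ${quarterly_high:,} per year")
```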

Applications using Retrieval-Augmented Generation (RAG) or semantic search require vector databases to store and query embeddings. Pinecone, Weaviate, and similar tools carry usage-based pricing.

At enterprise data volumes, vector storage costs range from $500 to $8,000 per month. Most PoC budgets do not include this line item.

Production AI systems require continuous monitoring for performance degradation, data drift, bias, and security anomalies. Tools like Evidently AI, Arize, or custom dashboards carry their own cost.

Monitoring infrastructure typically adds $1,000 to $5,000 per month, depending on model complexity and logging depth.
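One common way to quantify data drift is the Population Stability Index (PSI), which compares how a feature is distributed in a baseline sample versus recent production data. The sketch below is a minimal pure-Python version. It is not how Evidently AI or Arize compute drift. The bin count, the smoothing constant, and the 0.2 alert threshold are assumptions; 0.2 is a widely used rule of thumb, not a standard.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range production values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # smoothing so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]       # stand-in for a training feature
unchanged = psi(baseline, baseline)            # identical samples give 0.0
shifted = psi(baseline, [x + 0.3 for x in baseline])

DRIFT_THRESHOLD = 0.2  # assumed alert threshold
needs_review = shifted > DRIFT_THRESHOLD
```

A check like this would typically run on a schedule per feature. The monitoring cost quoted above comes largely from logging the production samples and storing the results, not from the calculation itself.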

Compliance is not a one-time sign-off. Regulated industries require ongoing audit logging, annual security reviews, and documentation updates as regulations evolve.

Post-launch compliance maintenance commonly runs $15,000 to $50,000 per year for HIPAA or SOC 2 environments.

McKinsey’s 2025 research consistently flags change management as the most underfunded cost category in AI programs. Teams need training. Workflows need redesign. Without structured adoption investment, AI tools deliver a fraction of their potential value.

Change management and internal enablement typically adds 10 to 20 percent to the total project cost. Most vendors do not mention it.

Total hidden cost estimate for a mid-scale enterprise AI system: $60,000 to $250,000 per year, on top of the initial build cost.
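The practical consequence is that multi-year total cost of ownership, not the build quote, is the number to budget against. As a rough sketch, the function below adds the annual hidden-cost range quoted above to an initial build cost. The $150,000 build figure and the three-year horizon are assumptions chosen for illustration.

```python
def total_cost_of_ownership(build_usd: int, annual_opex_usd: int, years: int) -> int:
    """Initial build cost plus recurring operating cost over the planning horizon."""
    return build_usd + annual_opex_usd * years


BUILD_USD = 150_000  # assumed mid-market build cost
YEARS = 3            # assumed planning horizon

# Annual hidden-cost range quoted above: $60,000 to $250,000.
tco_low = total_cost_of_ownership(BUILD_USD, 60_000, YEARS)
tco_high = total_cost_of_ownership(BUILD_USD, 250_000, YEARS)

# Share of the total that is operating cost, not build cost.
opex_share_low = (tco_low - BUILD_USD) / tco_low
opex_share_high = (tco_high - BUILD_USD) / tco_high

print(f"3-year TCO: ${tco_low:,} to ${tco_high:,}")
print(f"Operating share: {opex_share_low:.0%} to {opex_share_high:.0%}")
```

Under these assumptions, operations account for more than half of three-year spend at the low end and more than 80 percent at the high end.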

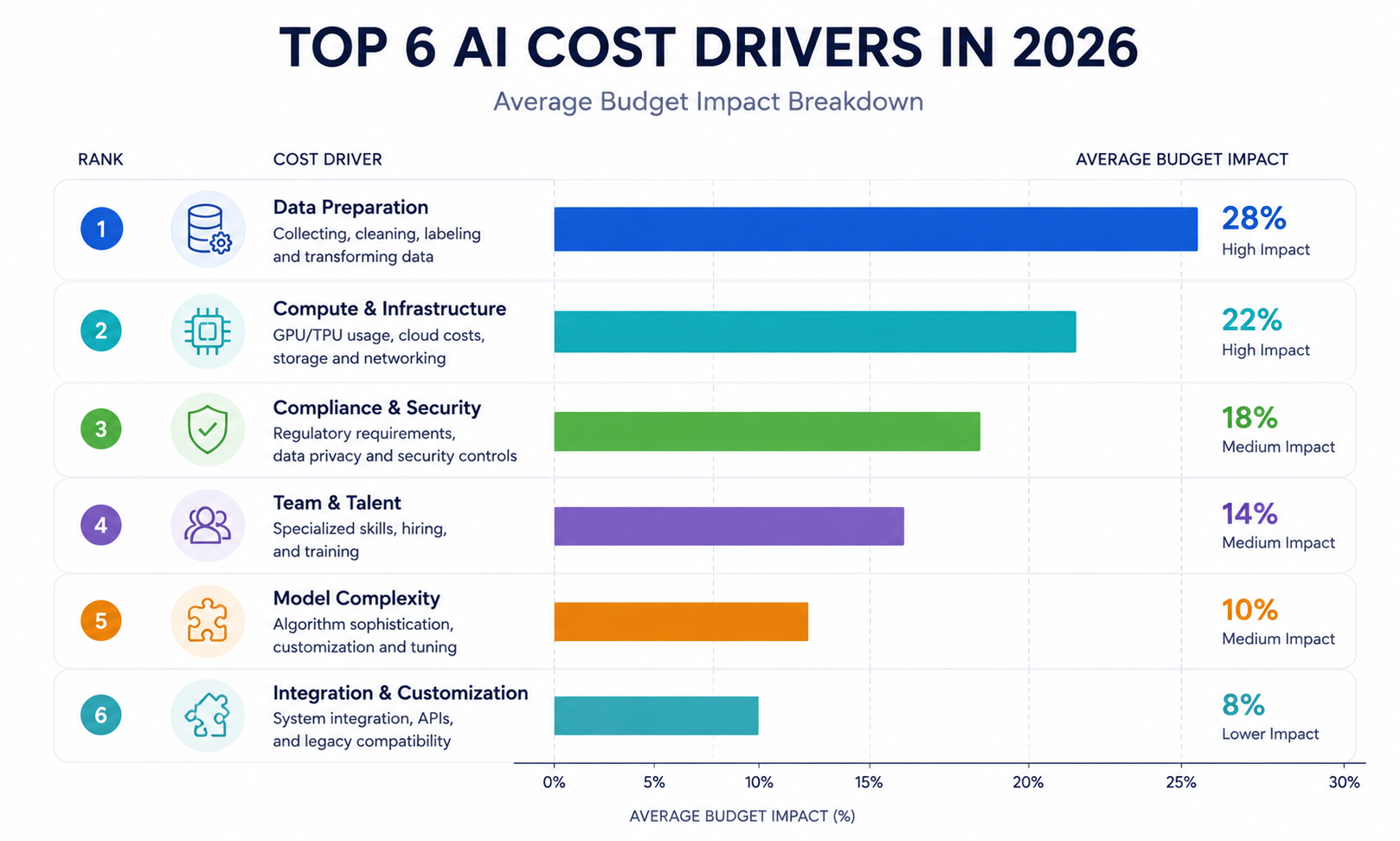

The top cost drivers in AI development are data quality and volume, model complexity, cloud compute requirements, team expertise, integration scope, and compliance obligations. Each factor can independently raise your project budget by 20 to 50 percent.

Clean, labeled, domain-specific training data is the foundation of any AI model. It is also where budgets most frequently go over.

Raw enterprise data is rarely ready to train on. It requires cleaning, normalization, annotation, and in many cases, synthetic augmentation.

Data preparation typically accounts for 20 to 40 percent of total AI project cost. See how we built AI-powered data pipelines for enterprise clients including logistics, healthcare, and fintech systems.

A narrow classification model costs far less to build than a generative AI system based on a large language model (LLM).

LLM fine-tuning cost ranges from $10,000 to $150,000+ depending on the base model, dataset size, and number of training cycles.

Pre-trained foundation models (GPT, Gemini, Claude) reduce training cost but introduce licensing, API, and customization constraints.

Model training and inference are compute-intensive. GPU costs have increased significantly with the rise of large-scale AI workloads.

McKinsey estimates global data center capital expenditure could reach $6.7 trillion by 2030, driven primarily by AI workloads. That infrastructure cost flows downstream to per-project compute pricing.

AI model training cost for a mid-scale custom model: $10,000 – $80,000 in cloud compute. Frontier LLMs require orders of magnitude more.

AI outsourcing rates vary significantly by geography. US-based senior ML engineers bill at $150 to $250 per hour. Offshore teams range from $40 to $90 per hour.

However, hourly rate is not the full picture. Experienced teams deliver cleaner architectures, fewer post-launch issues, and faster time-to-value.

Enterprise AI systems typically require a senior-led engineering team with expertise in ML, data engineering, and system architecture.

AI does not operate in isolation. Enterprise deployments integrate with ERP systems, CRMs, data lakes, APIs, and user-facing applications.

Each integration layer adds scoping, development, testing, and ongoing maintenance cost. Legacy systems increase this further.

Healthcare AI must meet HIPAA. Financial AI must address SOC 2 and FINRA. EU-facing systems require GDPR compliance. Each standard adds scope.

Compliance work includes data governance, audit logging, explainability frameworks, and often third-party security audits. Budget 20 to 40 percent above baseline for regulated industries.

AI vs. Traditional Software Development Cost Comparison

| Factor | Traditional Software | Custom AI Development |
| --- | --- | --- |
| Upfront Development Cost | $30K – $150K | $50K – $600K+ |
| Timeline to Launch | 3 – 9 months | 4 – 12 months |
| Scalability | Linear cost growth | Efficient at scale with cloud optimization |
| Ongoing Maintenance | Bug fixes, updates | Model retraining, drift monitoring |
| Value Delivery | Fixed feature set | Improves with data over time |
| Compliance Overhead | Standard QA | AI-specific governance and auditing |
| ROI Horizon | 6 – 18 months | 12 – 36 months (compounding returns) |
| Expertise Required | General dev teams | ML engineers, data scientists, architects |

The table above reflects a recurring insight from Deloitte’s enterprise technology research: AI carries higher upfront complexity but delivers compounding ROI that traditional software cannot replicate.

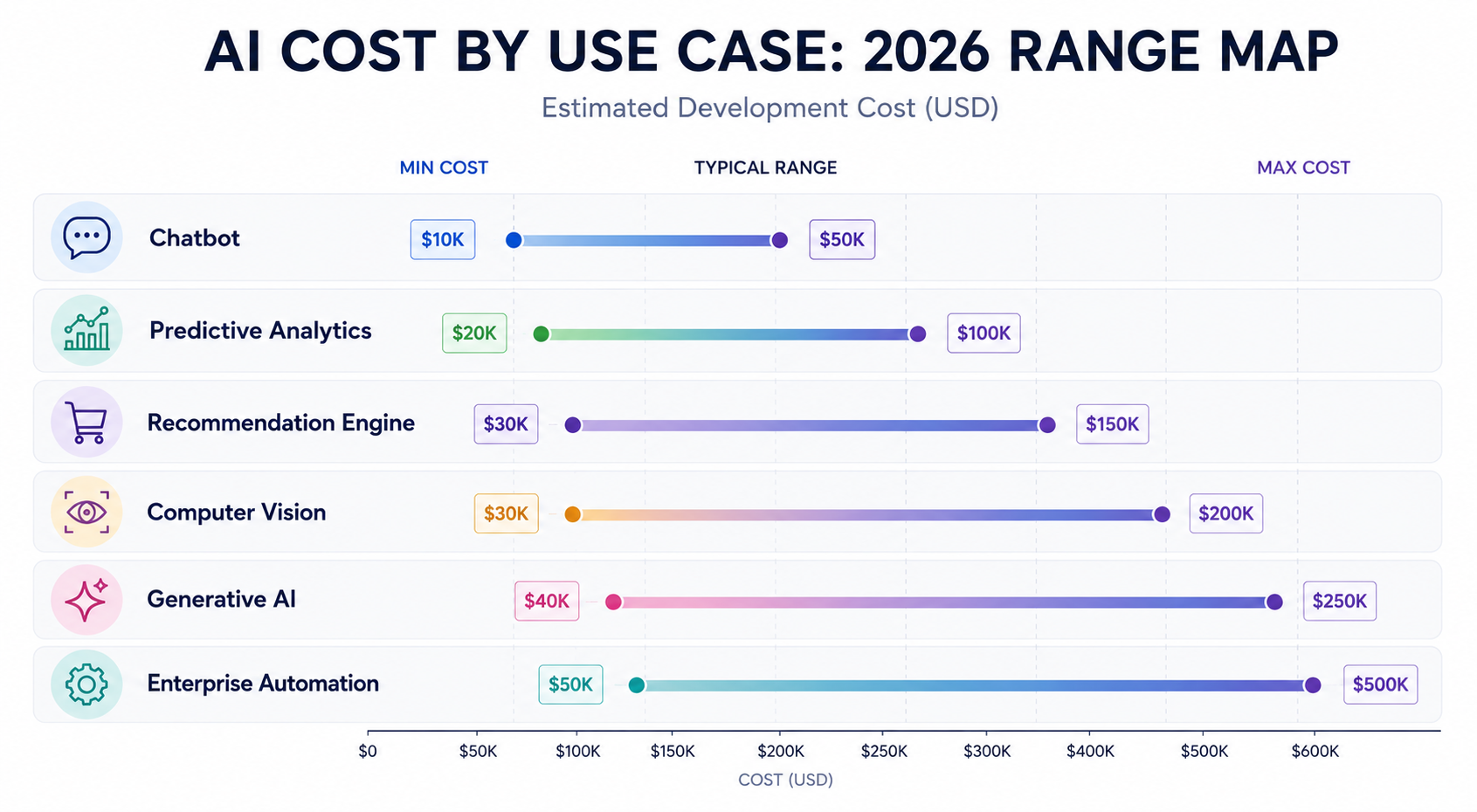

AI development cost varies significantly by use case. A chatbot, a predictive analytics engine, and a computer vision system carry very different cost profiles based on model architecture, data requirements, and deployment environment.

AI chatbot development costs range from $25,000 to $150,000. Variables include the number of intent types, language support, voice integration, CRM/ERP connection, and NLP model depth.

A basic FAQ chatbot using a pre-built NLP framework costs under $30,000. A context-aware enterprise assistant with proprietary knowledge base fine-tuning starts at $80,000.

A predictive analytics engine costs $40,000 to $200,000. Scope includes data pipeline construction, feature engineering, model selection, validation, and dashboard integration.

Demand forecasting for retail and churn prediction for SaaS are common use cases. Both require clean historical data and ongoing model recalibration.

An AI recommendation system costs $60,000 to $250,000. Real-time personalization at enterprise scale requires significant infrastructure investment.

Netflix-style recommenders involve collaborative filtering, embedding models, A/B testing pipelines, and continuous retraining. Simpler rule-based versions cost far less.

Computer vision systems for quality control, medical imaging, or retail analytics range from $80,000 to $400,000 depending on accuracy requirements and edge deployment needs.

Labeling visual training data is time-intensive. Projects requiring 100,000+ annotated images should plan for $30,000+ in data preparation alone.

The generative AI development cost for custom enterprise applications starts at $100,000. Fine-tuning a foundation model on proprietary data adds $20,000 to $150,000 depending on token volume and training cycles.

Retrieval-Augmented Generation (RAG) architectures have emerged as a cost-efficient alternative to full fine-tuning for knowledge-intensive applications.

AI Cost Drivers and Their Influence

| Cost Driver | Impact Level | Description |
| --- | --- | --- |
| Data Volume & Quality | Very High | Poor or limited data requires expensive preprocessing and augmentation |
| Model Complexity | Very High | LLMs and multimodal models cost more to train and deploy than narrow ML models |
| Cloud Compute & GPU Hours | High | Training on H100 clusters can cost $3-$30/hr; inference at scale multiplies cost |
| Team Location & Expertise | High | US-based ML engineers: $150-$250/hr; offshore: $40-$90/hr |
| Compliance Requirements | High | HIPAA, SOC 2, GDPR compliance adds 20-40% to project scope |
| Integration Complexity | Medium-High | Legacy system APIs, ERP integrations drive up development hours |
| Real-Time vs. Batch Processing | Medium | Real-time inference requires always-on infrastructure; batch is far cheaper |
| Number of AI Models | Medium | Multi-model pipelines increase orchestration and testing costs |
| Post-Launch Retraining Frequency | Medium | Monthly retraining adds $36K-$180K/year depending on scale |

This is the decision most enterprise teams face before any development begins. The answer depends on usage volume, data sensitivity, and how much control you need over the model.

Neither option is universally cheaper. The wrong choice for your scale will cost significantly more over a 24-month period than the right one would have upfront.

AI-as-a-Service (AIaaS) means building on top of existing foundation models through APIs. OpenAI, Anthropic, Google, and Cohere all offer commercial APIs that let you call pre-trained models without training your own.

Upfront cost is low. Integration typically runs $15,000 to $60,000. There is no training cost, no GPU infrastructure, and no ML team required to launch.

API pricing is usage-based. You pay per token processed, per query answered, per image analyzed.

At low volume, this is cost-effective. At enterprise scale, it reverses fast.

A system processing 2 million tokens per day on GPT-4 class models can generate $18,000 to $55,000 in monthly API fees. At that run rate, a custom fine-tuned model typically pays back its build cost within 9 to 14 months.
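The payback claim follows from a simple break-even calculation: the build cost divided by the monthly savings from no longer paying API fees. The sketch below is an illustration under stated assumptions. The inputs (a $150,000 build, $18,000 in monthly API fees, and $6,000 in monthly operating cost for the custom model) are picked from within the ranges in this guide, not taken from a real project.

```python
import math


def payback_months(build_usd: float, api_monthly_usd: float, custom_monthly_usd: float) -> float:
    """Months until cumulative monthly savings cover the custom model's build cost."""
    monthly_savings = api_monthly_usd - custom_monthly_usd
    if monthly_savings <= 0:
        return math.inf  # the custom model never pays back at this volume
    return build_usd / monthly_savings


# Assumed inputs; see the lead-in above.
months = payback_months(build_usd=150_000, api_monthly_usd=18_000, custom_monthly_usd=6_000)
print(f"Payback period: {months:.1f} months")

# At low API spend the custom build does not pay back at all.
never = payback_months(build_usd=150_000, api_monthly_usd=5_000, custom_monthly_usd=6_000)
```

The result is sensitive to the custom model's own running cost, which is why low-volume systems rarely justify a custom build.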

| Approach | Upfront Cost | Monthly Operating Cost at Scale | Control | Data Privacy |
| --- | --- | --- | --- | --- |
| AIaaS (API-based) | $15K–$60K | $5K–$55K+ | Low | Shared infrastructure |
| Fine-tuned foundation model | $40K–$150K | $2K–$10K | Medium | Configurable |
| Fully custom model | $150K–$600K+ | $3K–$15K | Full | On-premise or private cloud |
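To compare the three approaches over the 24-month window mentioned earlier, the table's ranges can be rolled up into a total. The sketch below is a rough illustration: it treats open-ended bounds such as $55K+ and $600K+ as the stated figure, and it assumes operating cost is flat from month one, which a ramping product would not see.

```python
# name: ((upfront_low, upfront_high), (monthly_low, monthly_high)), all in USD
APPROACHES = {
    "AIaaS (API-based)": ((15_000, 60_000), (5_000, 55_000)),
    "Fine-tuned foundation model": ((40_000, 150_000), (2_000, 10_000)),
    "Fully custom model": ((150_000, 600_000), (3_000, 15_000)),
}


def cost_over(months: int, upfront: tuple[int, int], monthly: tuple[int, int]) -> tuple[int, int]:
    """Low and high total cost: upfront spend plus flat monthly operating cost."""
    return (upfront[0] + months * monthly[0], upfront[1] + months * monthly[1])


for name, (upfront, monthly) in APPROACHES.items():
    low, high = cost_over(24, upfront, monthly)
    print(f"{name}: ${low:,} to ${high:,} over 24 months")
```

Under these assumptions the low end of AIaaS stays below the low end of a fully custom build, while its high end exceeds the custom model's high end. That is consistent with the point that neither option is universally cheaper.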

AIaaS is the right starting point when you are validating a use case, operating at low to medium volume, or building an internal tool where data sensitivity is manageable.

It is also the right choice when speed to market outweighs long-term cost optimization. A working AIaaS product in 60 days beats a custom model in 9 months for most early-stage decisions.

Custom development makes economic and operational sense under three conditions.

First, when monthly API costs approach or exceed $10,000. The crossover point where a custom model becomes cheaper typically falls between 12 and 18 months of operation.

Second, when your data cannot leave your infrastructure. Healthcare, financial services, and defense applications often require on-premise or private cloud deployment that commercial APIs cannot support.

Third, when the model needs to perform on proprietary knowledge that general-purpose models do not have. Customer-specific document processing, industry-specific classification, and domain-specific generation all require fine-tuning or custom training.

Most production AI systems in 2026 are not purely AIaaS or fully custom. They use a foundation model as the reasoning layer and add proprietary data and retrieval logic on top through a RAG architecture.

This approach costs 40 to 70 percent less than full fine-tuning while maintaining domain-specific performance. It is the most cost-efficient architecture for knowledge-intensive enterprise applications at mid-scale.
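The retrieval layer in a RAG system can be sketched in a few lines. The example below is a toy illustration, not a production design: the word-count "embedding" stands in for a real embedding model, an in-memory list stands in for a vector database, the documents are hypothetical, and the final call to a foundation model is left out.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Assemble the prompt that would be sent to the foundation model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical proprietary knowledge base.
docs = [
    "Carrier invoices are audited against contracted rates every month",
    "Refund requests must be filed within 30 days of delivery",
    "The office cafeteria is closed on public holidays",
]
question = "When are carrier invoices audited"
prompt = build_prompt(question, docs)
```

The cost advantage comes from this structure: the proprietary knowledge lives in the retrievable documents, so updating it means re-indexing text instead of running another training cycle.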

The following three case studies represent the types of enterprise AI engagements Code Brew Labs has structured. All details are generalized for confidentiality.

Project Name: ShipSmart™

Problem: A global logistics enterprise struggled with manual auditing and document processing for 50,000+ monthly shipping transactions. This manual bottleneck resulted in a 12% rework rate and an annual shipping spend of $1.2 million with zero visibility into carrier inefficiencies.

Solution: Code Brew Labs deployed ShipSmart™, an intelligent document processing system. The solution integrated custom OCR, NLP, and deep learning neural networks to automatically identify discrepancies, overcharges, and negotiation leverage points in real-time.

Outcome:

Related reference: Explore our enterprise AI portfolio and AI logistics capabilities

Project Name: Fins AI Ecosystem

Problem: A leading financial services firm required a high-security digital ecosystem for transaction intelligence. The platform needed to process $100K+ in daily volume while adhering to strict SOC 2 Type II and global fintech audit standards to ensure “Explainable AI” (XAI) outcomes.

Solution: Code Brew Labs built the Fins AI Ecosystem, an Agentic AI risk scoring engine featuring an immutable decision audit trail. The system utilized XAI frameworks and military-grade encryption to automate risk analysis and fraud detection within milliseconds.

Outcome:

Project Name: Nizcare

Problem: Nizcare, a global healthcare marketplace, needed to scale to 150+ countries and 10,000+ businesses. They required an AI-driven symptom checker and consultation engine capable of handling millions of interactions without per-user inference bottlenecks or HIPAA breaches.

Solution: Code Brew Labs designed a scalable RAG (Retrieval-Augmented Generation) architecture for Nizcare, integrated into a cross-platform React Native app. The system featured asynchronous inference pipelines and real-time feature stores to manage massive healthcare datasets.

Outcome:

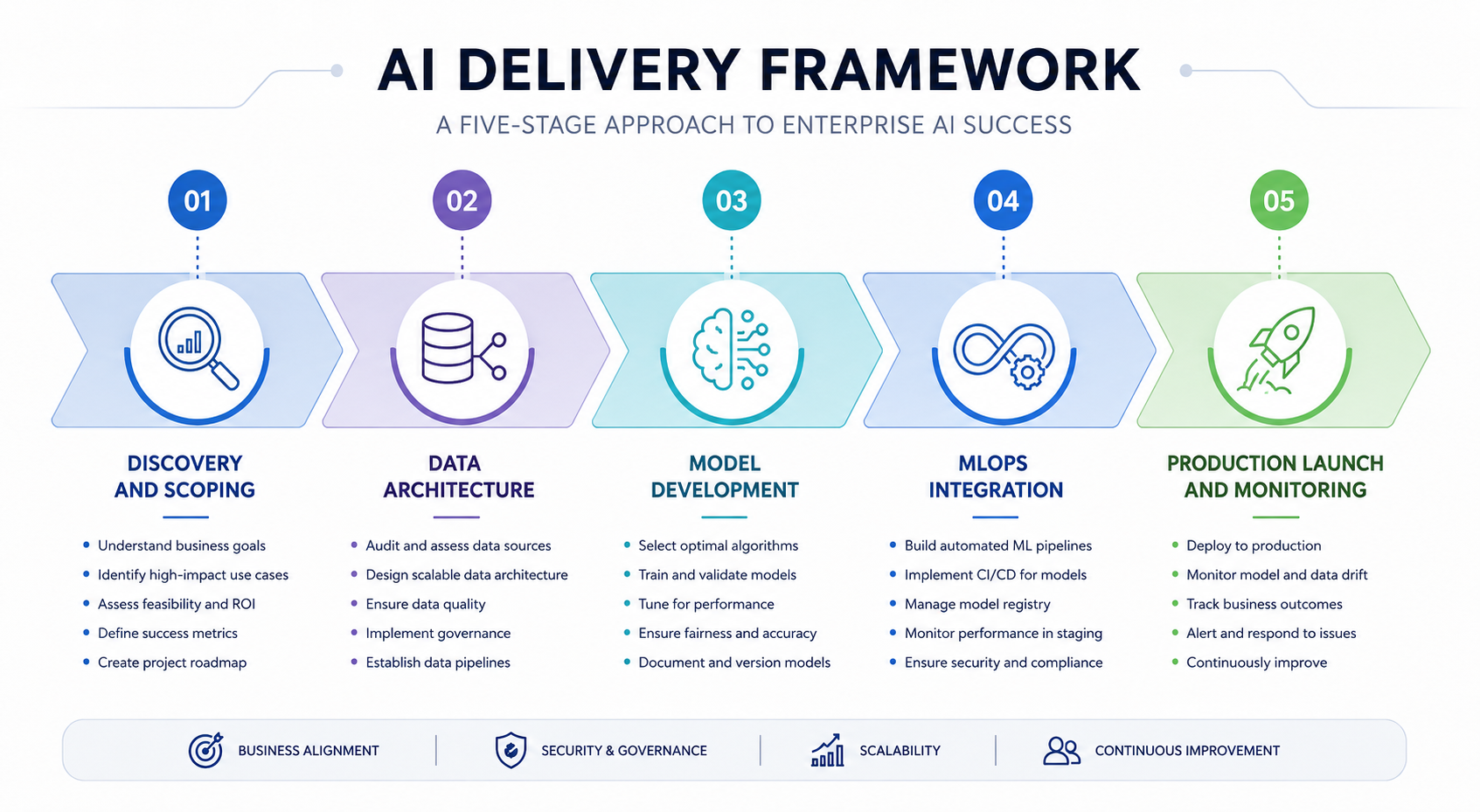

Code Brew Labs is not an order-taking development shop. It is an AI systems partner for enterprises that need defensible, scalable, and compliant AI infrastructure.

Every engagement begins with architecture review, not code. This reduces costly redesigns at scale and ensures the system is built to your data reality, not a generic template.

Many AI projects fail between prototype and production. Code Brew Labs integrates MLOps practices from the first sprint, including model versioning, automated retraining pipelines, and drift monitoring.

For regulated industries, Code Brew Labs designs compliance into the system architecture, not as a post-build layer. This applies to HIPAA, SOC 2, ISO 27001, and GDPR frameworks.

Every Code Brew Labs engagement includes a phased cost plan with explicit budget gates. You will know the AI development cost before each phase begins, not after.

Start with our AI ROI and cost estimation tool to model your project before the first conversation.

Code Brew Labs works across the complete AI stack: data engineering, model development, API layers, enterprise integrations, and user-facing applications.

To reduce AI development cost, start with a proof of concept on a narrow use case, use pre-trained foundation models where possible, prioritize cloud-native MLOps from the start, and phase compliance work in line with risk level.

IBM’s AI cost research highlights that staged investment reduces the risk of overbuilding. A $30,000 PoC can validate assumptions before committing to a $300,000 platform build.

Pre-trained foundation models from providers like Anthropic, OpenAI, and Google significantly reduce training cost when fine-tuned with domain data. Full training from scratch is rarely necessary in 2026.

RAG (Retrieval-Augmented Generation) architectures cost 40 to 70 percent less than full fine-tuning for knowledge-heavy applications, with comparable accuracy on most tasks.

Right-sizing infrastructure is another underused lever. Many teams over-provision GPU instances for inference. Spot instances, model quantization, and async processing can reduce cloud costs by 30 to 60 percent.

Building an AI app in 2026 costs between $40,000 for an MVP and $600,000+ for a full enterprise platform. The final number depends on model complexity, data requirements, compliance needs, and integration scope.

A complete AI cost breakdown includes: discovery and scoping, data collection and preparation, model architecture, training and fine-tuning, API and integration development, UI/UX, testing and security, deployment, and ongoing maintenance. Each phase carries distinct cost drivers.

AI chatbot development cost ranges from $25,000 for a basic FAQ bot to $150,000+ for an enterprise-grade conversational AI with CRM integration, multi-language support, and proprietary fine-tuning.

LLM fine-tuning costs range from $10,000 to $150,000+ depending on the base model, dataset size, and compute hours required. RAG architectures offer a cost-effective alternative at 40 to 70 percent lower cost for knowledge retrieval use cases.

AI development has higher upfront costs than traditional software, typically 2x to 4x for a comparable feature set. However, AI systems improve with data over time and can deliver compounding ROI. Traditional software ROI plateaus after initial deployment.

The main cost drivers are: poor data quality requiring extensive preprocessing, high model complexity, real-time inference infrastructure, compliance requirements (HIPAA, SOC 2, GDPR), and deep legacy system integrations. Each can independently increase project cost by 20 to 50 percent.

AI outsourcing rates vary widely. US-based senior ML engineers charge $150 to $250 per hour. Eastern European specialists range from $80 to $120 per hour. Offshore teams in South and Southeast Asia charge $40 to $90 per hour. Project outcomes depend more on team expertise than hourly rate.

According to McKinsey’s 2025 AI survey, high-performing enterprises allocate over 20% of their digital budget to AI. For a $10M technology budget, that is $2M+ directed toward AI development, infrastructure, and operations annually. Mid-market companies typically start at $100K to $500K for a first major AI system.

AI development cost in 2026 is not a fixed number. It is a function of scope, data, architecture choices, compliance obligations, and team quality.

The companies achieving the strongest AI ROI are not the ones who spent the most. They are the ones who scoped correctly, built for scale from the start, and treated AI as an ongoing capability, not a one-time project.

Gartner’s TCO frameworks make this clear: evaluating only upfront development cost leads to budget overruns and system rewrites within 18 months.

Code Brew Labs has delivered AI systems across logistics, fintech, healthcare, and SaaS – from compliance-driven risk engines to large-scale inference infrastructure. A deep understanding of diverse data environments, regulatory requirements, and production-scale workloads enables more accurate AI cost estimation before development begins.

Start narrow. Validate fast. Scale deliberately. Partner with a team that has done it across industries.